Workload-Driven CPU Selection: Virtualization, AI, HPC, and Databases

Virtualization & Cloud Workloads: Core Count, PCIe Lanes, and I/O Throughput

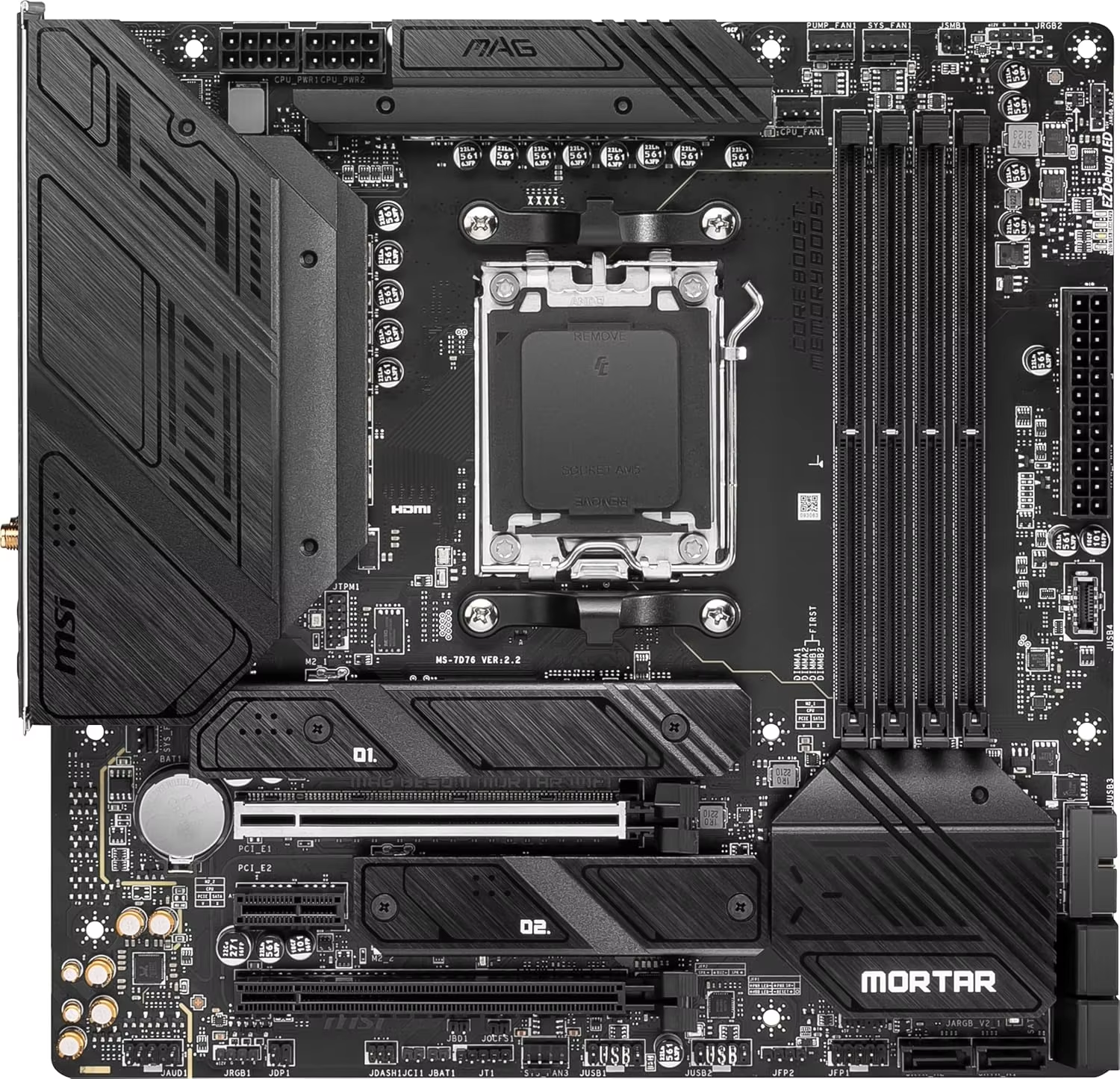

Choosing a CPU for virtualization and cloud hosts means balancing core count against I/O capacity. More cores let you consolidate more virtual machines onto one physical host, since each VM's virtual CPUs must be scheduled onto physical threads. Core count alone is not enough, though, if the platform cannot supply enough PCIe 5.0 lanes. Those lanes come from the CPU rather than the motherboard, and a host that serves fast NVMe storage and GPUs at the same time benefits from a platform offering around 128 of them. Without sufficient I/O bandwidth, latency spikes show up during operations such as VM migration. Memory channels matter as well: an 8-channel configuration reduces contention for memory bandwidth when database-heavy VMs share a host with general compute workloads.
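The lane arithmetic behind that sizing decision can be sketched in a few lines. This is a minimal illustration, not a sizing tool: the device mix, the `Device` class, and the `lane_budget` helper are hypothetical, and the 128-lane figure is simply the one quoted above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Device:
    """One class of PCIe device installed in the host (hypothetical model)."""
    name: str
    lanes_each: int
    count: int

    @property
    def lanes(self) -> int:
        return self.lanes_each * self.count


def lane_budget(available_lanes: int, devices: list[Device]) -> dict:
    """Sum the requested lanes and report headroom (negative means oversubscribed)."""
    requested = sum(d.lanes for d in devices)
    return {
        "requested": requested,
        "available": available_lanes,
        "headroom": available_lanes - requested,
        "fits": requested <= available_lanes,
    }


# Assumed device mix for a virtualization host; adjust to the real build.
host_devices = [
    Device("NVMe SSD (x4)", 4, 8),
    Device("GPU (x16)", 16, 4),
    Device("NIC (x16)", 16, 1),
]

report = lane_budget(128, host_devices)
print(report)
```

Running the same device list against a smaller lane count shows how quickly a GPU-plus-NVMe host becomes oversubscribed.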

AI & HPC Workloads: Single-Thread Latency, Memory Bandwidth, and FP64/INT8 Acceleration

AI training and HPC workloads stress CPUs in different ways. Parallel phases make good use of many cores, but single-thread latency still matters for serial steps such as data preprocessing. With BERT models, for example, per-core response times above 3 nanoseconds reportedly slow batch processing by about 22%. Memory bandwidth matters even more. In HPC simulations, systems with 850GB/s of bandwidth can complete fluid dynamics calculations roughly twice as fast as systems limited to 400GB/s. Dedicated FP64 units help with scientific modeling, while INT8 instructions speed up inference. Systems that lack these features can take around 40% longer to train AI models in MLPerf tests, a penalty that adds up quickly in research environments where every hour counts.
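The bandwidth comparison can be checked with a simple lower-bound estimate. The sketch below assumes a kernel limited purely by memory bandwidth and a made-up data volume of 2 TB; `bandwidth_bound_seconds` is an illustrative helper, not a benchmark.

```python
def bandwidth_bound_seconds(bytes_moved: float, bandwidth_gb_s: float) -> float:
    """Lower-bound runtime for a kernel limited only by memory bandwidth."""
    if bandwidth_gb_s <= 0:
        raise ValueError("bandwidth must be positive")
    return bytes_moved / (bandwidth_gb_s * 1e9)


# Hypothetical simulation step that streams 2 TB through memory.
BYTES_MOVED = 2e12

slow = bandwidth_bound_seconds(BYTES_MOVED, 400)
fast = bandwidth_bound_seconds(BYTES_MOVED, 850)
speedup = slow / fast

print(f"400 GB/s: {slow:.2f} s, 850 GB/s: {fast:.2f} s, speedup: {speedup:.2f}x")
```

Under this idealized model the speedup is just the bandwidth ratio, which is consistent with the "roughly twice as fast" figure. Real codes that are partly compute-bound will see less.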

Transactional Databases: Why ECC Stability, Cache Size, and Memory Latency Matter More Than Core Count

For transactional databases, stability takes precedence over raw speed. ECC memory detects and corrects the silent single-bit errors that would otherwise corrupt data unnoticed. According to 2023 research from Ponemon, recovering from this kind of error can cost around $740,000. Large L3 caches of at least 60MB cut wait times by keeping frequently accessed data on the chip, which makes OLTP queries run roughly 30% faster than on systems with smaller caches. Adding cores can even hurt: in MySQL testing, 32-core machines took about 15% longer to commit transactions than 24-core machines because of NUMA effects. For real-time analytics, keeping memory latency under 80 nanoseconds matters far more than the number of cores in the processor.
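The interaction between cache hit rate and memory latency follows the standard average-access-time formula. The sketch below uses assumed values (a 10 ns L3 hit, the 80 ns DRAM latency mentioned above, and two hypothetical hit rates) to show why a larger cache pays off.

```python
def average_access_ns(l3_hit_rate: float, l3_latency_ns: float,
                      dram_latency_ns: float) -> float:
    """Average latency of an access that reaches L3.

    Every access pays the L3 lookup; misses additionally pay the DRAM latency.
    """
    if not 0.0 <= l3_hit_rate <= 1.0:
        raise ValueError("hit rate must be between 0 and 1")
    return l3_latency_ns + (1.0 - l3_hit_rate) * dram_latency_ns


# Assumed figures for illustration only.
small_cache = average_access_ns(l3_hit_rate=0.80, l3_latency_ns=10, dram_latency_ns=80)
large_cache = average_access_ns(l3_hit_rate=0.95, l3_latency_ns=10, dram_latency_ns=80)

print(f"80% hit rate: {small_cache:.1f} ns, 95% hit rate: {large_cache:.1f} ns")
```

With these inputs, raising the hit rate from 80% to 95% nearly halves the average access time, without touching core count.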

Creative & Technical Professional Workloads: Rendering, Video Editing, and Simulation

3D Rendering & Scientific Simulation: Threadripper Pro vs. Xeon W vs. EPYC Performance Realities

High-quality 3D rendering and complex scientific simulation push hardware to its limits in parallel processing. Workstation processors have to balance core count against memory throughput. The AMD Threadripper Pro stands out here with 64-core configurations and eight channels of DDR5 memory. For finite element analysis and similar simulations, sustained FP64 performance is critical. EPYC's 12-channel memory design reduces bottlenecks by around 43% compared to systems with eight memory channels. In ray tracing, Threadripper Pro has an edge thanks to its larger L3 cache pools, while Intel's Xeon W series still holds its ground in single-threaded CAD applications where responsiveness matters most. Most physically based rendering software scales almost linearly with core count, so going beyond 32 cores becomes close to necessary for artists who want to cut render times from several hours to minutes. Thermal management remains a concern too. During long computational fluid dynamics runs, heat buildup can limit sustained performance, so liquid cooling is practically a requirement for serious workstation builds.
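The effect of channel count on memory throughput can be estimated from theoretical peak bandwidth: channels times transfer rate times 8 bytes per transfer. The sketch below assumes DDR5-4800 on both platforms, which is an illustrative choice rather than a statement about any specific product.

```python
def peak_bandwidth_gb_s(channels: int, transfer_rate_mt_s: int,
                        bus_bytes: int = 8) -> float:
    """Theoretical peak memory bandwidth in GB/s (64-bit data bus per channel)."""
    return channels * transfer_rate_mt_s * bus_bytes / 1000


# Assumed DDR5-4800 on both platforms for a like-for-like comparison.
eight_channel = peak_bandwidth_gb_s(8, 4800)
twelve_channel = peak_bandwidth_gb_s(12, 4800)

print(f"8 channels:  {eight_channel:.1f} GB/s")
print(f"12 channels: {twelve_channel:.1f} GB/s")
print(f"ratio:       {twelve_channel / eight_channel:.2f}x")
```

These are ceilings; sustained bandwidth in real simulations is lower and depends on access patterns and DIMM population.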

Video Editing & Encoding: Quick Sync, AVX-512, and Unified Memory Architecture Impact on CPU Choice

Most video editing setups prioritize smooth real-time previews and faster exports. Intel's Quick Sync, for instance, offloads H.265 encoding to the media engine in the processor's integrated GPU, so exporting 4K timelines takes about 70% less time than software encoding alone. For complex color grades and LUTs, the AVX-512 instructions in Xeon W processors operate on 512-bit vectors, processing many color values with a single instruction. A unified memory architecture also becomes important with large 8K RAW files, because the CPU and GPU share one memory pool and data no longer has to be copied between separate memory areas. Workstation builders should also keep the following in mind:

- Dual CPU configurations rarely benefit video editing due to NUMA latency

- H.266/VVC codec workflows require hardware acceleration support

- 128GB+ DDR5 ECC memory prevents dropped frames during multi-cam editing

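ProRes RAW workflows demand sustained memory bandwidth exceeding 100GB/s. That figure is set by memory channel count and speed, which is where Threadripper Pro's multi-channel DDR5 memory gives it an advantage; its PCIe 5.0 lanes govern storage and GPU throughput instead.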
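To get a feel for where such bandwidth figures come from, the sketch below estimates the data rate of decoded frames in a multi-cam timeline. The 8 bytes per pixel (16-bit RGBA working format), 30 fps, and four streams are assumptions for illustration; real pipelines read and write each frame several times, so actual memory traffic is a multiple of this number.

```python
def stream_rate_gb_s(width: int, height: int, bytes_per_pixel: int,
                     fps: int, streams: int = 1) -> float:
    """Data rate of uncompressed decoded frames, in GB/s."""
    return width * height * bytes_per_pixel * fps * streams / 1e9


# Assumed working format: 8K frames, 16-bit RGBA (8 bytes per pixel), 30 fps.
one_stream = stream_rate_gb_s(7680, 4320, bytes_per_pixel=8, fps=30)
four_streams = stream_rate_gb_s(7680, 4320, bytes_per_pixel=8, fps=30, streams=4)

print(f"1 stream:  {one_stream:.2f} GB/s")
print(f"4 streams: {four_streams:.2f} GB/s")
```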

Enterprise-Grade CPU Features That Ensure Reliability and Security

ECC Memory, Hardware-Based Security (AMD SME / Intel SGX), and Firmware Validation

Enterprise workstation CPUs need dedicated features to protect against data corruption and security threats. ECC memory, for instance, detects and corrects bit-flip errors as data is processed. This matters in fields such as financial modeling and genomic research, where a single wrong calculation can invalidate the results. Hardware security features such as AMD's Secure Memory Encryption (SME) and Intel's Software Guard Extensions (SGX) enforce isolation at the hardware level, protecting memory contents from malware with little performance overhead. Firmware validation verifies the boot chain every time the machine starts, which blocks tampered BIOS or firmware code from running. Together, these features form a three-layer defense for businesses that need dependable stability. Real-world tests show a 35-40% drop in crashes during memory-intensive tasks, and the features also help companies meet compliance requirements in heavily regulated sectors.
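How ECC locates and repairs a flipped bit can be shown with a toy Hamming(7,4) code. This is a teaching sketch only: real ECC DIMMs use wider codes over 64-bit words in hardware, and the `encode` and `decode` helpers here are illustrative names, not part of any memory controller API.

```python
def encode(data: list[int]) -> list[int]:
    """Encode 4 data bits into a 7-bit Hamming codeword (parity at positions 1, 2, 4)."""
    d1, d2, d3, d4 = data
    p1 = d1 ^ d2 ^ d4  # covers positions 1, 3, 5, 7
    p2 = d1 ^ d3 ^ d4  # covers positions 2, 3, 6, 7
    p4 = d2 ^ d3 ^ d4  # covers positions 4, 5, 6, 7
    return [p1, p2, d1, p4, d2, d3, d4]


def decode(codeword: list[int]) -> tuple[list[int], int]:
    """Correct up to one flipped bit; return (data bits, 1-based error position or 0)."""
    c = list(codeword)
    s1 = c[0] ^ c[2] ^ c[4] ^ c[6]
    s2 = c[1] ^ c[2] ^ c[5] ^ c[6]
    s4 = c[3] ^ c[4] ^ c[5] ^ c[6]
    error_pos = s1 + 2 * s2 + 4 * s4  # syndrome spells out the faulty position
    if error_pos:
        c[error_pos - 1] ^= 1  # flip the faulty bit back
    return [c[2], c[4], c[5], c[6]], error_pos


word = [1, 0, 1, 1]
stored = encode(word)
stored[4] ^= 1  # simulate a single bit flip at position 5
recovered, position = decode(stored)

print("recovered correctly:", recovered == word, "| flipped position:", position)
```

The parity bits let the decoder compute the position of the flipped bit directly, which is why single-bit errors can be fixed transparently instead of merely detected.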

AMD vs. Intel CPU Comparison for Enterprise Workstations

Core Count Trade-offs: When High-Core CPUs Reduce Responsiveness in Interactive Workloads

While high-core-count processors deliver exceptional throughput for parallelized tasks like rendering or scientific computing, they often compromise responsiveness in interactive workloads. Real-time applications—such as live data visualization, CAD manipulation, or financial modeling—require low-latency single-thread performance rather than raw core density. When core counts exceed 24–32, several bottlenecks emerge:

- Scheduling overhead: OS thread management introduces latency as tasks shuffle between cores

- Thermal constraints: Aggressive multi-core boosting triggers throttling, reducing per-core speeds

- Memory contention: More cores competing for RAM bandwidth increase access latency

Benchmark data reveals that 64-core processors can exhibit 15–30% slower response times than 16-core equivalents in interactive scenarios. For enterprise workstations handling mixed workloads, a balanced 16–24 core configuration typically optimizes both parallel processing and user-facing responsiveness—avoiding diminishing returns where additional cores idle while critical foreground tasks stall.
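The diminishing returns described above can be illustrated with Amdahl's law, which bounds the speedup from n cores when only a fraction p of the work runs in parallel. The 90% parallel fraction below is an assumed value for a mixed workload, and the model ignores the scheduling, thermal, and memory effects listed earlier, which make high core counts look even less favorable.

```python
def amdahl_speedup(cores: int, parallel_fraction: float) -> float:
    """Upper bound on speedup over one core, per Amdahl's law."""
    if cores < 1:
        raise ValueError("cores must be at least 1")
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel fraction must be between 0 and 1")
    serial = 1.0 - parallel_fraction
    return 1.0 / (serial + parallel_fraction / cores)


# Assumed: 90% of a mixed workload parallelizes cleanly.
PARALLEL_FRACTION = 0.90

for cores in (8, 16, 24, 32, 64):
    speedup = amdahl_speedup(cores, PARALLEL_FRACTION)
    efficiency = speedup / cores
    print(f"{cores:>2} cores: {speedup:5.2f}x speedup, {efficiency:6.1%} efficiency")
```

Under this assumption, quadrupling the core count from 16 to 64 adds well under 50% more speedup, while per-core efficiency falls sharply.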
